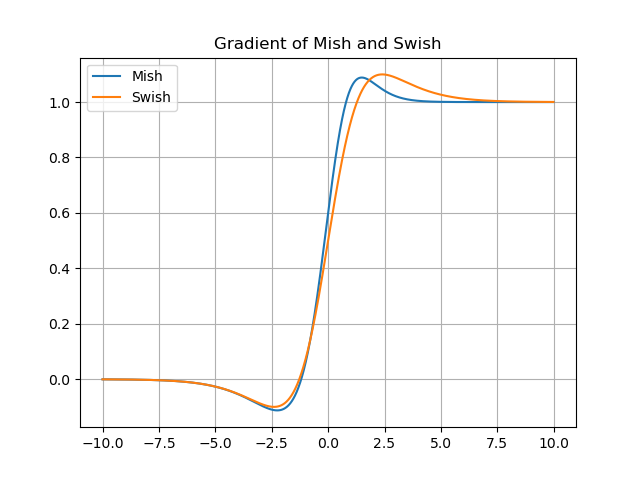

Below is a short explanation of the activation functions available in the `tf.keras.activations` module from the TensorFlow v2.10.0 distribution and `torch.nn` from PyTorch 1.12.1. Currently, there are several types of activation functions that are used in various scenarios.

Figure: the first and second derivatives of TanhExp, Swish, and Mish (from the publication "TanhExp: A Smooth Activation Function with…").

The mechanism of neural networks works similarly to that of human brains. Since we started using neural networks, activation functions have been an essential part of a neuron. The activation function is what allows us to adjust weights and biases: its derivative feeds backpropagation during learning. For this reason, the function and its derivative must have a low computational cost.

Sigmoid Activation Function: the sigmoid function looks like an S-shaped curve. Formula: $f(z) = 1 / (1 + e^{-z})$.

When you chain values that are smaller than one, such as $0.2 \times 0.15 \times 0.3$, you get really small numbers (in this case 0.009).

The swish function is a mathematical function defined as $f(x) = x \cdot \sigma(\beta x)$, where $\sigma$ is the sigmoid function and $\beta$ is either constant or a trainable parameter depending on the model. For $\beta = 1$, the function becomes equivalent to the Sigmoid Linear Unit, or SiLU, first proposed alongside the GELU in 2016. Like ReLU, Swish is unbounded above and bounded below. Unlike ReLU, Swish is smooth and non-monotonic.

Swish has also been studied in hardware: one paper introduces the circuit design details of the swish activation function and its derivative, implemented using RRAM devices and passive components on 28nm FD-SOI.

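The swish definition and its derivative can be sketched in plain Python. This is a minimal illustration, not the TensorFlow or PyTorch implementation: the names `sigmoid`, `swish`, and `swish_grad` are chosen here for the example, and the derivative follows from the product rule applied to $x \cdot \sigma(\beta x)$.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid 1 / (1 + e^-z), written to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def swish(x: float, beta: float = 1.0) -> float:
    """Swish: x * sigmoid(beta * x). With beta = 1 this is SiLU."""
    return x * sigmoid(beta * x)


def swish_grad(x: float, beta: float = 1.0) -> float:
    """Derivative of swish with respect to x, via the product rule."""
    s = sigmoid(beta * x)
    # d/dx [x * s(beta x)] = s + x * beta * s * (1 - s)
    return s + beta * x * s * (1.0 - s)


if __name__ == "__main__":
    # Print a few sample points; negative inputs show the small dip below zero.
    for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
        print(f"x={x:+.1f}  swish={swish(x):+.4f}  grad={swish_grad(x):+.4f}")
```

At $x = 0$ the function is 0 and its slope is 0.5. Comparing `swish(-5.0)` with `swish(-1.0)` shows the non-monotonic behaviour: the value at $-5$ is closer to zero than the value at $-1$.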
Swish, Mish and Serf belong to the same family of activation functions possessing the self-gating property. Like Mish, Serf also possesses a pre-conditioner, which results in better optimization and thus enhanced performance. Swish is defined as $f(x) = x \cdot \sigma(x)$ (1), where $\sigma(x) = (1 + \exp(-x))^{-1}$ is the sigmoid function. We define Serf as $f(x) = x \, \operatorname{erf}(\ln(1 + e^{x}))$, where erf is the error function (1998). The swish activation function draws on both the sigmoid and ReLU non-linear functions, with the aim of improving the accuracy and speed of a neural network.

For a neuron to learn, we need something that changes progressively from 0 to 1, that is, a continuous (and differentiable) function. The tangent of the activation function indicates whether the neuron is learning. For a function that jumps abruptly from 0 to 1, the tangent at $x = 0$ is $\infty$. This value is unrealistic because, in real life, we learn everything step by step.

The vanishing gradient problem primarily occurs with the Sigmoid and Tanh activation functions, whose derivatives produce outputs of $0 < x' < 1$, except for Tanh, which produces $x' = 1$ at $x = 0$.
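As a rough sketch of the formulas above, Serf and Mish can be written with Python's standard `math` module, alongside a small helper that multiplies per-layer derivatives to show how chained values below one shrink. The function names (`softplus`, `mish`, `serf`, `sigmoid_grad`, `tanh_grad`, `chained_gradient`) are illustrative choices for this example. The Mish form $x \cdot \tanh(\ln(1 + e^{x}))$ is the commonly used definition and is an assumption here, since the text gives no formula for it.

```python
import math


def softplus(x: float) -> float:
    """ln(1 + e^x), computed in an overflow-safe form."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mish(x: float) -> float:
    """Mish: x * tanh(softplus(x)) (assumed standard definition)."""
    return x * math.tanh(softplus(x))


def serf(x: float) -> float:
    """Serf: x * erf(ln(1 + e^x)), as defined in the text."""
    return x * math.erf(softplus(x))


def sigmoid_grad(x: float) -> float:
    """Derivative of the sigmoid, s * (1 - s); its maximum is 0.25 at x = 0."""
    s = 0.5 * (1.0 + math.tanh(0.5 * x))  # sigmoid expressed through tanh
    return s * (1.0 - s)


def tanh_grad(x: float) -> float:
    """Derivative of tanh, 1 - tanh(x)^2; equals 1 only at x = 0."""
    return 1.0 - math.tanh(x) ** 2


def chained_gradient(local_grads: list[float]) -> float:
    """Product of per-layer derivatives, as the chain rule multiplies them."""
    return math.prod(local_grads)


if __name__ == "__main__":
    print("serf(1.0) =", serf(1.0), " mish(1.0) =", mish(1.0))
    print("chained   =", chained_gradient([0.2, 0.15, 0.3]))
    # Ten sigmoid layers evaluated at their steepest point (x = 0).
    print("10 layers =", chained_gradient([sigmoid_grad(0.0)] * 10))
```

Because the sigmoid derivative never exceeds 0.25, ten such factors multiply to at most $0.25^{10}$, which is why deep stacks of sigmoid or tanh units tend to pass very small gradients back to early layers.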
